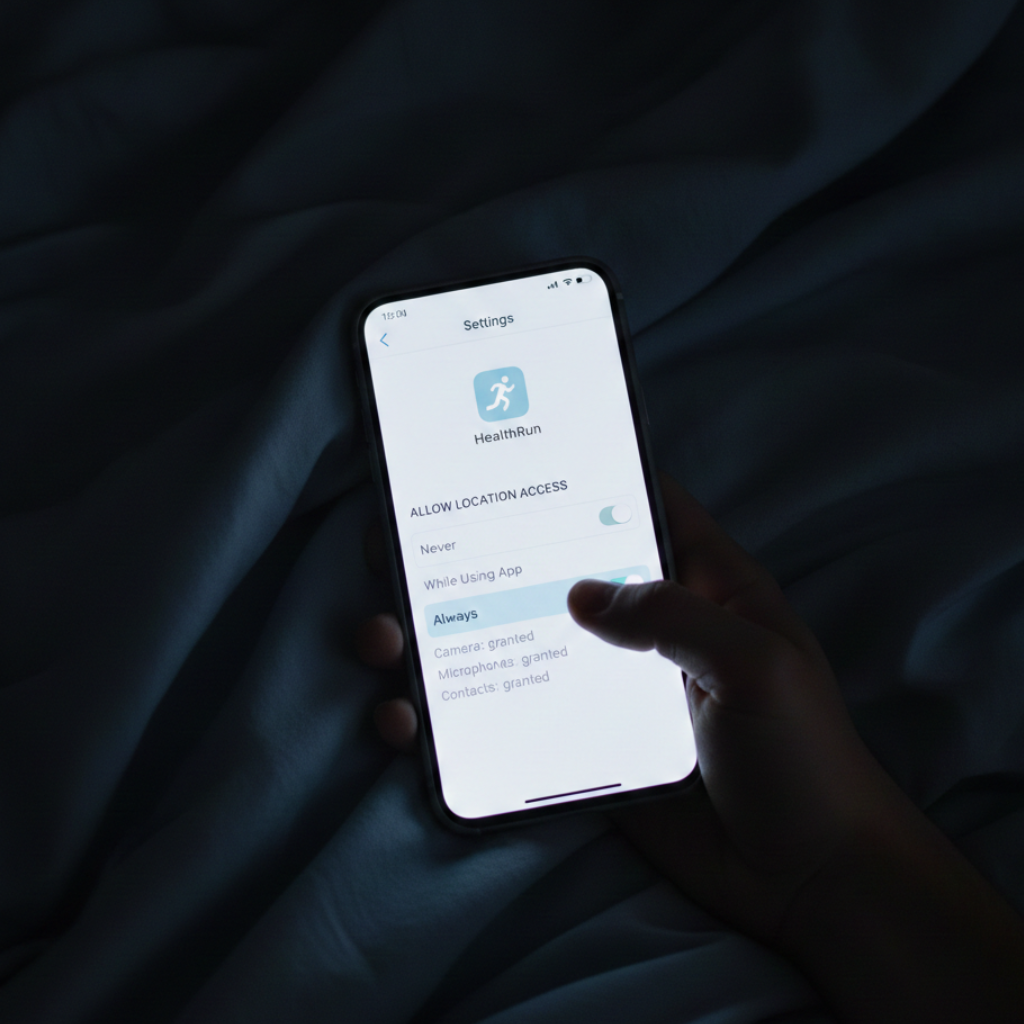

Eleven PM. I’m lying in bed, thumb scrolling through iPhone settings, when I see it glowing in that clinical sans-serif: Always. Not “While Using App.” Not “While Running.” Always. Every minute I sleep. Every shower. Every argument with my wife. The fitness app I downloaded to count steps doesn’t track my runs; it tracks my life. I tap the toggle off, hands suddenly cold. Hadn’t I only agreed to map my Saturday morning routes? I scroll down. Camera: granted. Microphone: granted. Health data: granted. Contacts: granted. Each permission was a door I’d opened without reading the sign. This isn’t a fitness app I’m holding. It’s a surveillance device I invited in, fed my most intimate data, and carried for three years in my pocket, warm against my thigh.

I remember granting these permissions. January 2022—the hangover, the resolution, the optimism. Get healthy this year. Isn’t that what we all say? The app asked to track my location “to map your runs and show you your route.” Reasonable. Helpful, even. A digital running partner. But I don’t remember the word Always. I don’t remember agreeing to surveillance while I sleep. While I sit in the doctor’s office waiting for test results. While I write in coffee shops, thinking I’m alone with my thoughts. And I certainly don’t remember consenting to hand my camera and microphone to an app whose business model I never questioned. Why would I? It was free.

So I start digging. Privacy researchers. Federal Trade Commission documents. Legal experts who spend their careers untangling what “free” actually costs. What I find makes Always On location tracking look quaint. Menstrual cycles. Pregnancy intentions. Mental health struggles logged in therapy apps. Sexual orientation inferred from usage patterns. Your precise GPS coordinates, not “within a city block” but accurate to within a few meters, timestamped, building a map of everywhere you’ve been for years. Heart rate variability that reveals stress levels. Sleep patterns that signal depression. Gait abnormalities that suggest early Parkinson’s.

And here’s what makes my stomach drop: all of it is linked to my name. My email. My device identifier, which never changes unless I buy a new phone. In one Surfshark study, three fitness apps were found to collect what researchers classified as “very sensitive information”: racial background, sexual orientation, pregnancy details, disability status, religious beliefs, political opinions, genetic data, and biometrics. I check my phone. I have two of them.

In 2013, data brokers sold lists of “rape sufferers” for 7.9 cents per name. That was twelve years ago, before smartphones were everywhere, before we started voluntarily logging our most intimate health details into apps we thought were private. A Duke study later found active markets for lists of people with depression, anxiety, bipolar disorder, and ADHD. Lists of the vulnerable. Lists of us. The market didn’t disappear. It got more sophisticated.

I set down my phone. The Always On toggle still glows. I thought I was tracking my health. Turns out, I was the one being tracked.

But how? How does an app that tracks my morning jog know my mental health history, my sexual orientation, that I searched for antidepressants at 2 AM last Thursday? I thought the tracking was simple. Tap “Start Workout,” and the app logs my route and stores it on my phone. I use the app; it helps me, and my data stays mine. A transaction. Contained.

Then I learn about SDKs. Software Development Kits: pre-packaged code that developers plug into apps like LEGO blocks. Except instead of building towers, they’re building something else. One SDK from Facebook for social sharing. Another from Google for analytics. A third from AppsFlyer to track you across multiple apps. Each SDK is its own program, running invisibly inside the fitness app. Each one collects data. Each one transmits it home. The app developer often doesn’t fully understand what’s being taken.

The FTC calls them “automatic ‘plug and play’ tracking pixels designed to grab a substantial amount of consumer data and turn it over for advertising purposes.” That language appears in its complaint against GoodRx, a prescription discount app that promised users it would “never share personal health information with advertisers.” But GoodRx had embedded Facebook, Google, and Criteo SDKs. Every time someone searched for HIV medication, antidepressants, or birth control, the SDKs captured it. Linked it to their email, their unique ad ID. Transmitted everything. GoodRx didn’t actively decide to share each search. The SDKs did it automatically. Continuously. Invisibly.

I pull up my fitness app’s privacy policy. It runs 10,247 words. I search for “SDK.” It appears once, in section 8.3: “We may use third-party service providers to perform analytics, advertising, and other services on our behalf.” No list of which third parties. No explanation of what they collect. No disclosure that these “service providers” are actually autonomous data extractors operating inside the app I thought I owned.

I stare at my phone screen. One app icon. But inside it, five companies, ten, sometimes dozens, all collecting simultaneously. All transmitting. I’m not using a fitness app. I’m carrying surveillance software that happens to count my steps.
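To make the LEGO-block picture a little more concrete, here is a deliberately simplified sketch in Python. Everything in it is hypothetical: the class names, the three generic vendors, and the event fields are invented for illustration and are not the API of Facebook’s, Google’s, AppsFlyer’s, or any other real SDK. It only models the shape of the problem: the app reports one event, and every embedded SDK gets its own copy, stamped with the same email and advertising ID.

```python
# Hypothetical sketch: one host-app event fanned out to several embedded SDKs.
# All names, vendors, and fields are invented for illustration; real SDKs
# have their own, different APIs and often collect events automatically.
from dataclasses import dataclass, field


@dataclass
class Device:
    email: str
    ad_id: str  # one advertising identifier, visible to every SDK in the app


@dataclass
class EmbeddedSDK:
    vendor: str
    outbox: list = field(default_factory=list)  # stands in for a network upload

    def on_event(self, device: Device, name: str, props: dict) -> None:
        # Each SDK stamps the event with the same identifiers, so its vendor
        # can link this record to anything else tied to that email or ad ID.
        self.outbox.append(
            {"email": device.email, "ad_id": device.ad_id, "event": name, **props}
        )


class HostApp:
    def __init__(self, device: Device, sdks: list) -> None:
        self.device = device
        self.sdks = sdks

    def log_event(self, name: str, **props) -> None:
        # In this simplified model the developer makes one call and every
        # embedded SDK silently receives a full copy of the event.
        for sdk in self.sdks:
            sdk.on_event(self.device, name, props)


device = Device(email="runner@example.com", ad_id="AD-1234")
sdks = [EmbeddedSDK("social_sdk"), EmbeddedSDK("analytics_sdk"), EmbeddedSDK("attribution_sdk")]
app = HostApp(device, sdks)

app.log_event("search", query="antidepressants")
app.log_event("workout_start", lat=40.7128, lon=-74.0060)

for sdk in sdks:
    print(sdk.vendor, len(sdk.outbox), sdk.outbox[0]["query"])
```

Running it prints one line per vendor, each reporting two captured events that start with the antidepressant search. The detail worth noticing is the shared identifier: because every copy carries the same email and ad ID, each company receiving it can tie the search and the workout location to the same person.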

Coming up… What the FTC Documents Revealed.